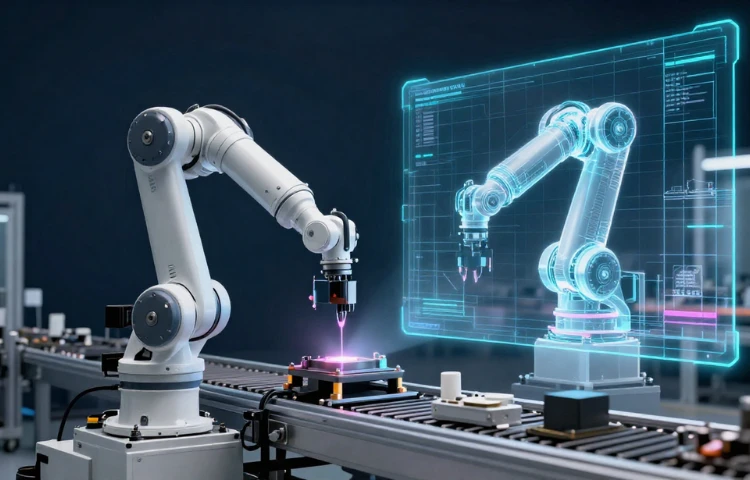

The ABB Robotics and NVIDIA Omniverse pairing is one of the most important factory tech developments to watch in 2026 because it targets the biggest pain point in industrial automation: the gap between what works in simulation and what survives the real world. By embedding NVIDIA Omniverse libraries into ABB RobotStudio, ABB is pushing beyond basic digital twins toward hyper-realistic, physics-accurate environments where robots, sensors, lighting, materials, and motion behave as they do on the production floor.

This matters because physical AI lives or dies on reliability. A vision model that performs beautifully in a clean lab can stumble in a plant filled with glare, shadows, reflective metals, and constant variation. With Omniverse-powered simulation and a controller stack aligned to real robot behavior, ABB aims to help teams validate automation cells faster, train models on synthetic data, and scale deployments with fewer surprises during commissioning.

On March 9, 2026, ABB Robotics and NVIDIA announced a partnership that plugs NVIDIA Omniverse libraries directly into ABB RobotStudio, the programming and simulation platform used by tens of thousands of robotics engineers worldwide. The goal is simple to state but ambitious: make simulation so physically accurate that what you validate in a digital twin behaves the same way on the factory floor, at scale. NVIDIA’s official post covers the details: [NVIDIA Blog]

That one step tackles the biggest hidden tax in industrial automation: the sim-to-real gap. Lighting changes, reflective parts, sensor noise, slippery materials, small tolerances, and real-world variation have a habit of humiliating clean simulations. ABB and NVIDIA claim up to 99 percent correlation between simulated and real-world behavior when the virtual controller runs the same firmware as the physical robot. That is the kind of number that makes manufacturing leaders stop budgeting for endless physical retesting.
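The announcement does not spell out how the correlation figure is measured. As a rough illustration only, one way a team might quantify sim-to-real agreement is to compare a simulated joint trajectory against the one logged from the physical robot. The sketch below computes a Pearson correlation coefficient in plain Python; the function name and the sample values are hypothetical and are not taken from ABB or NVIDIA tooling.

```python
import math


def pearson_correlation(simulated, measured):
    """Pearson correlation coefficient between two equal-length series."""
    if len(simulated) != len(measured) or len(simulated) < 2:
        raise ValueError("series must have the same length (at least 2 samples)")
    n = len(simulated)
    mean_s = sum(simulated) / n
    mean_m = sum(measured) / n
    # Covariance and variances are left unnormalized; the 1/n factors cancel.
    cov = sum((s - mean_s) * (m - mean_m) for s, m in zip(simulated, measured))
    var_s = sum((s - mean_s) ** 2 for s in simulated)
    var_m = sum((m - mean_m) ** 2 for m in measured)
    if var_s == 0 or var_m == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / math.sqrt(var_s * var_m)


# Hypothetical joint-angle samples in degrees, made up for illustration:
# one series from the digital twin, one logged from the physical robot.
simulated_deg = [0.0, 10.0, 20.0, 30.0, 40.0, 50.0]
measured_deg = [0.2, 9.7, 20.4, 29.5, 40.6, 49.8]

r = pearson_correlation(simulated_deg, measured_deg)
print(f"sim-to-real correlation: {r:.4f}")
```

Correlation alone can hide a constant offset or a scale error between the two series, so a check like this would usually be paired with an absolute error metric such as maximum deviation.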

ABB’s new capability is branded as RobotStudio HyperReality, planned for release in the second half of 2026. It is designed to let teams design, program, test, and validate an automation cell before a single robot is installed. According to the announcement, expected impacts include lower deployment costs and faster time to market, driven by reduced commissioning and fewer physical prototypes.

Here is the core loop that makes this more than a shiny demo:

1. ABB exports the full robot station into Omniverse using USD (Universal Scene Description). That includes robots, sensors, lighting, kinematics, and parts as a fully parameterized station. Omniverse is built to simulate physics and render photorealistic scenes, which is essential for vision-based robotics.

2. A virtual controller runs the same brain as the real robot. When the controller firmware matches what runs in production, you reduce “controller drift” between the digital twin and the real line. This is a big reason the partnership claims near-real-world correlation.

3. Synthetic data becomes a first-class manufacturing input. Omniverse can generate synthetic images for training vision models, so teams can train and validate perception without waiting on perfect labeled datasets from the floor. That matters for high-mix production where parts change constantly.
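To make the synthetic-data idea concrete, here is a minimal sketch of domain randomization, the common technique of varying scene conditions so a vision model sees more than one idealized setup. It does not use any Omniverse or RobotStudio API. The `SceneVariation` fields and the sampling ranges are assumptions chosen for illustration, and a real pipeline would pass each variation to a renderer to produce a labeled image.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneVariation:
    """One randomized set of scene conditions (hypothetical parameters)."""
    light_intensity_lux: float
    light_angle_deg: float
    surface_reflectivity: float  # 0 = matte, 1 = mirror-like
    camera_noise_sigma: float
    part_offset_mm: tuple  # (x, y) placement error of the part


def sample_variation(rng):
    """Draw one scene variation; the ranges are illustrative assumptions."""
    return SceneVariation(
        light_intensity_lux=rng.uniform(200.0, 1500.0),
        light_angle_deg=rng.uniform(0.0, 90.0),
        surface_reflectivity=rng.uniform(0.1, 0.95),
        camera_noise_sigma=rng.uniform(0.0, 0.05),
        part_offset_mm=(rng.uniform(-2.0, 2.0), rng.uniform(-2.0, 2.0)),
    )


def generate_variations(count, seed=0):
    """Generate a reproducible list of scene variations."""
    rng = random.Random(seed)  # seeded so a dataset can be regenerated exactly
    return [sample_variation(rng) for _ in range(count)]


variations = generate_variations(5, seed=42)
for v in variations:
    print(v)
```

Seeding the generator is a deliberate choice: when a model fails on a particular synthetic image, the same scene conditions can be regenerated and inspected.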

The Robot Report offers a robotics-industry perspective on the practical implications: [The Robot Report]

ABB says early pilots include Foxconn, in consumer electronics assembly, and Workr, which is focused on faster deployment for small and mid-size manufacturers. For a quick read on Foxconn’s pilot specifically, this summary is solid: [Evertiq]

The interesting thread here is not just speed. It is repeatability. Electronics assembly is ruthless: reflective metals, tight tolerances, and frequent product refreshes. If a digital twin can reliably predict behavior, you can move from “custom robotics project” to “repeatable rollout playbook.”

Physical AI is not “AI in a dashboard.” It is AI that perceives the world, reasons under uncertainty, and acts through machines that can cause real outcomes. Scaling that safely requires more than a model. It requires a pipeline:

As robots become more autonomous and AI-driven, regulators increasingly treat them as safety-relevant systems, not just productivity tools.

If you are evaluating ABB Robotics NVIDIA Omniverse capabilities, here is a grounded way to prepare without buying into hype cycles:

The ABB Robotics and NVIDIA Omniverse partnership is not just a shiny headline; it is a blueprint for how physical AI becomes practical in real factories. When simulation reaches near-real-world fidelity, teams can design, test, and validate automation cells before equipment arrives, generate synthetic data that actually reflects shop-floor conditions, and reduce the expensive trial and error that slows deployments. That is how industrial-grade physical AI moves from isolated pilots to repeatable rollouts across sites, lines, and product variants.

The bigger win is confidence. Better correlation between digital twins and reality means fewer surprises during commissioning, faster changeovers when products evolve, and a clearer path to scaling vision-driven robotics without constantly rebuilding datasets from scratch. For manufacturers, integrators, and operations leaders, the takeaway is straightforward: treat simulation, data, and governance as core production assets, not side projects.
